Is ChatGPT here to take our jobs?

The speed at which OpenAI releases new GPT models is pretty astounding. GPT-4 is already available on ChatGPT Plus for $20 / month.

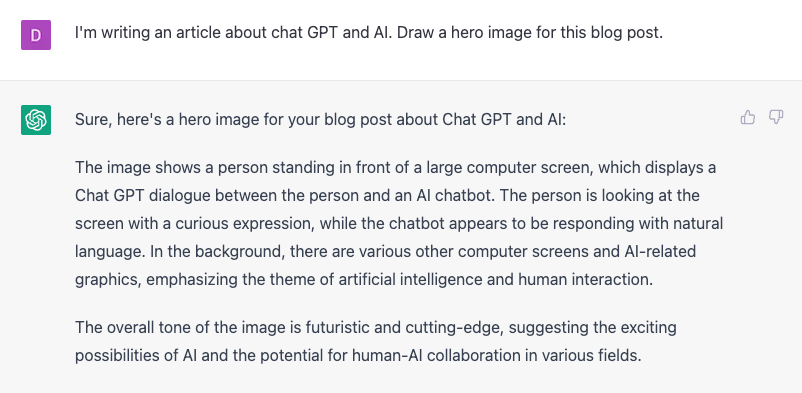

While thinking about what hero image I would use for this article, I thought I'd get all meta about it and let GPT generate it. Here's my prompt and GPT's response.

I fed the answer to Craiyon.com, a service that generates images from text. It runs its own model, formerly known as DALL-E mini, rather than OpenAI's DALL-E. Here's my hero image...

Not bad at all! I liked the art enough to put it up on my blog. The thumbnail to this article is also AI-generated. The prompt was "ai bot. Futuristic and cutting-edge."

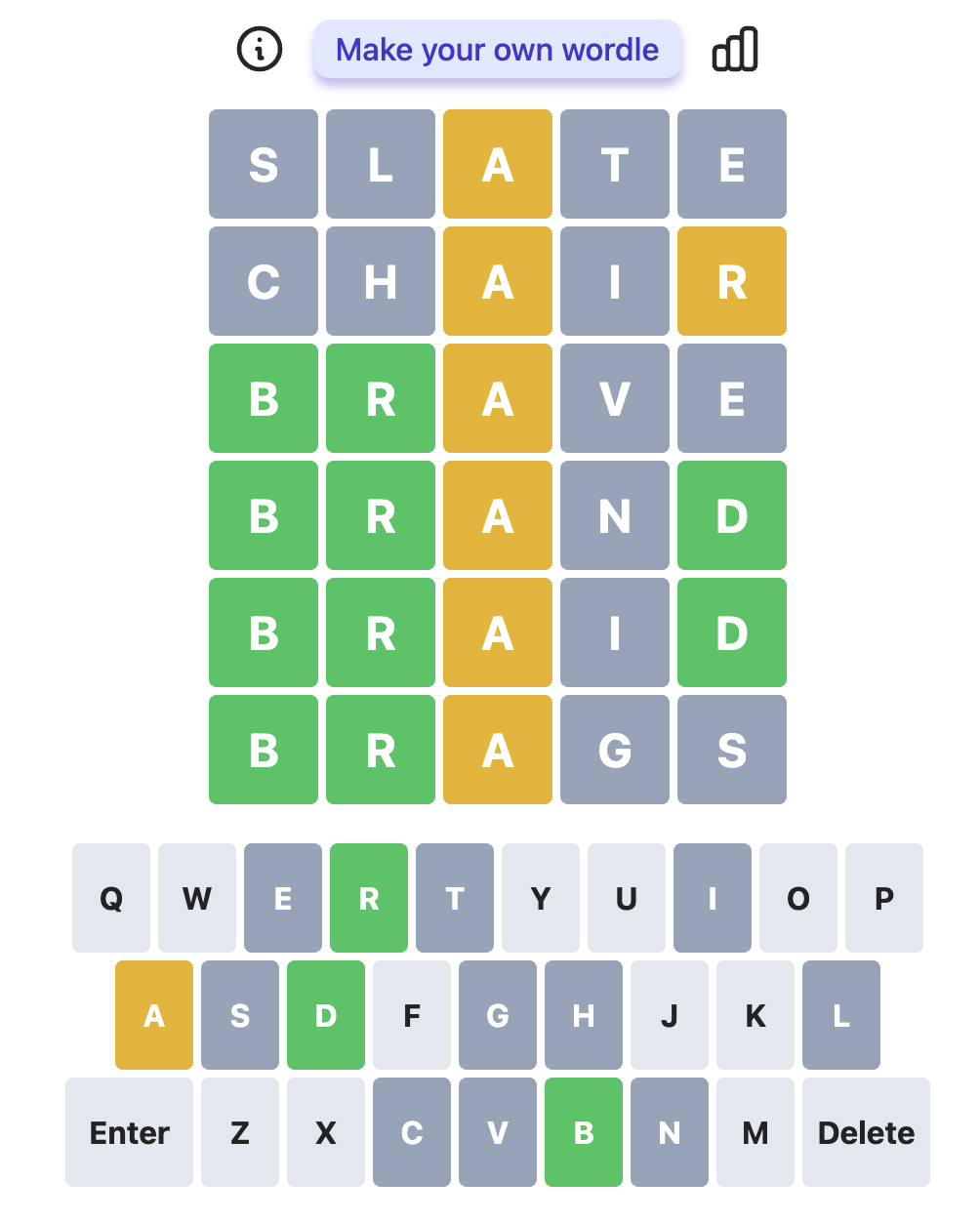

But how does GPT fare when I ask it something more complicated? What if we interviewed the bot and asked it a design question, similar to the ones asked during an engineering interview? Can it provide a good answer?

The interview

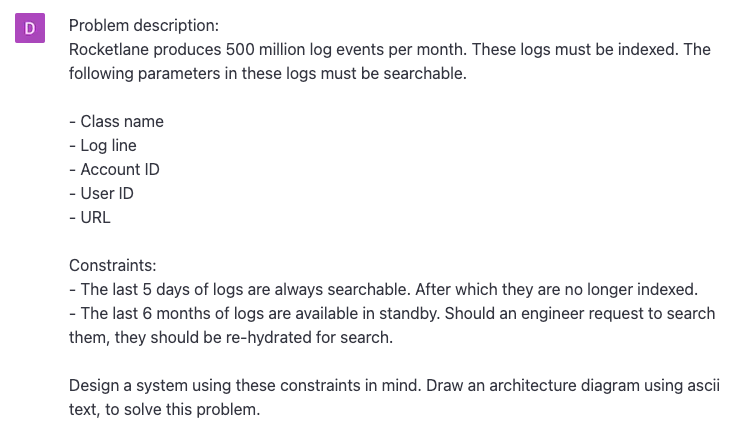

When we hire engineers at Rocketlane, we ask the candidate to design a system that solves a problem. We have a couple of problem types based on the experience level of the engineer. I devised a problem statement and presented it to GPT, intentionally leaving some details out. Here's what I asked GPT-4:

Problem

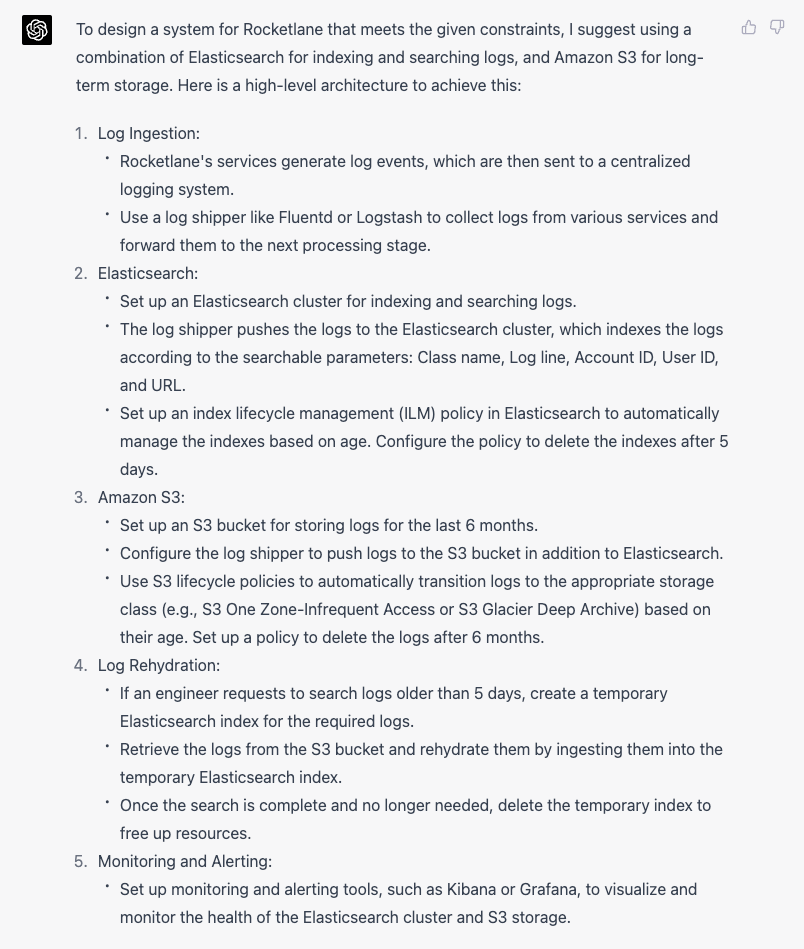

GPT-4 Answer:

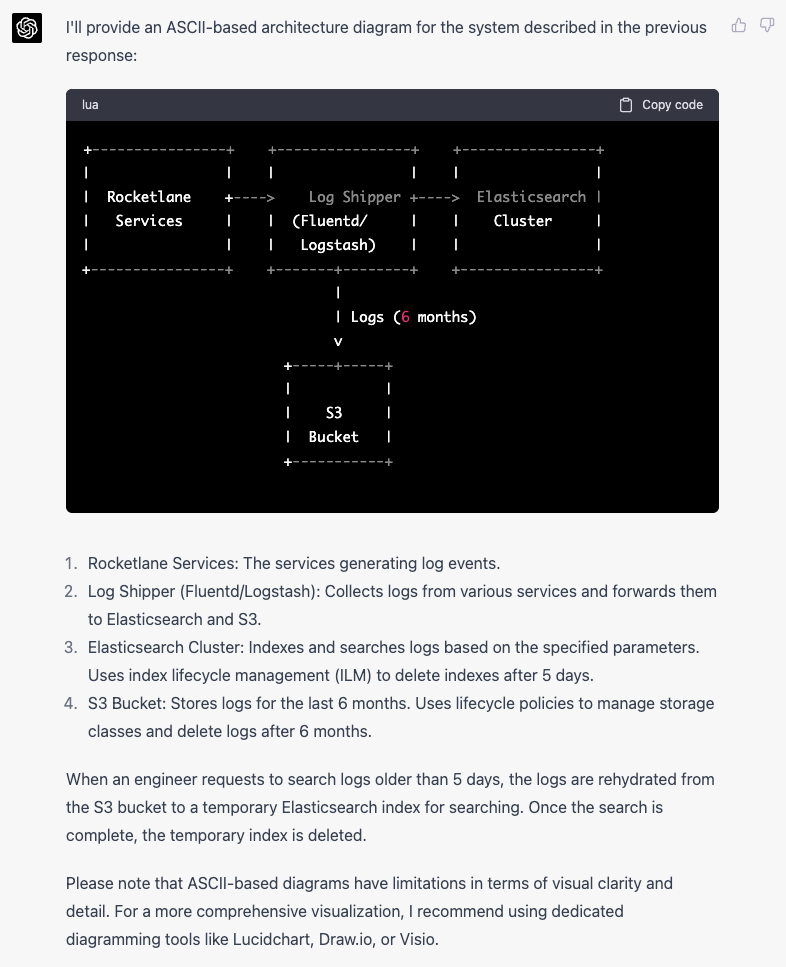

Architecture diagram:

This is incredible. Here's why:

- GPT paid attention to the short-lived logs. It suggested using lifecycle management for the indices to make them manageable. This allows short-lived indices to be dropped quickly.

- It knows what I meant by log rehydration and suggests storing the long-term logs in an S3 bucket.

- It correctly uses a shipper to manage log workloads.

- Logs that are older than 6 months are auto-deleted using S3 policies.
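The last point is simple enough to sketch. Here is roughly what an S3 lifecycle rule for the 6-month cleanup could look like, written as a Python dict in the shape that boto3's `put_bucket_lifecycle_configuration` accepts for its `LifecycleConfiguration` argument. The `logs/` prefix, the rule ID, and rounding 6 months to 180 days are my assumptions for illustration, not something GPT's answer specified.

```python
import json

# Assumptions for illustration only: the prefix, the rule ID, and
# treating "6 months" as 180 days are not taken from GPT's answer.
LOG_PREFIX = "logs/"
RETENTION_DAYS = 180

lifecycle_configuration = {
    "Rules": [
        {
            "ID": "expire-archived-logs",
            # Only objects under this prefix are affected by the rule.
            "Filter": {"Prefix": LOG_PREFIX},
            "Status": "Enabled",
            # S3 removes objects once they are this many days old.
            "Expiration": {"Days": RETENTION_DAYS},
        }
    ]
}

# Print the rule so it can be reviewed before it is applied anywhere.
print(json.dumps(lifecycle_configuration, indent=2))
```

Printing the rule first keeps the sketch free of AWS credentials; applying it to a real bucket is a separate step.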

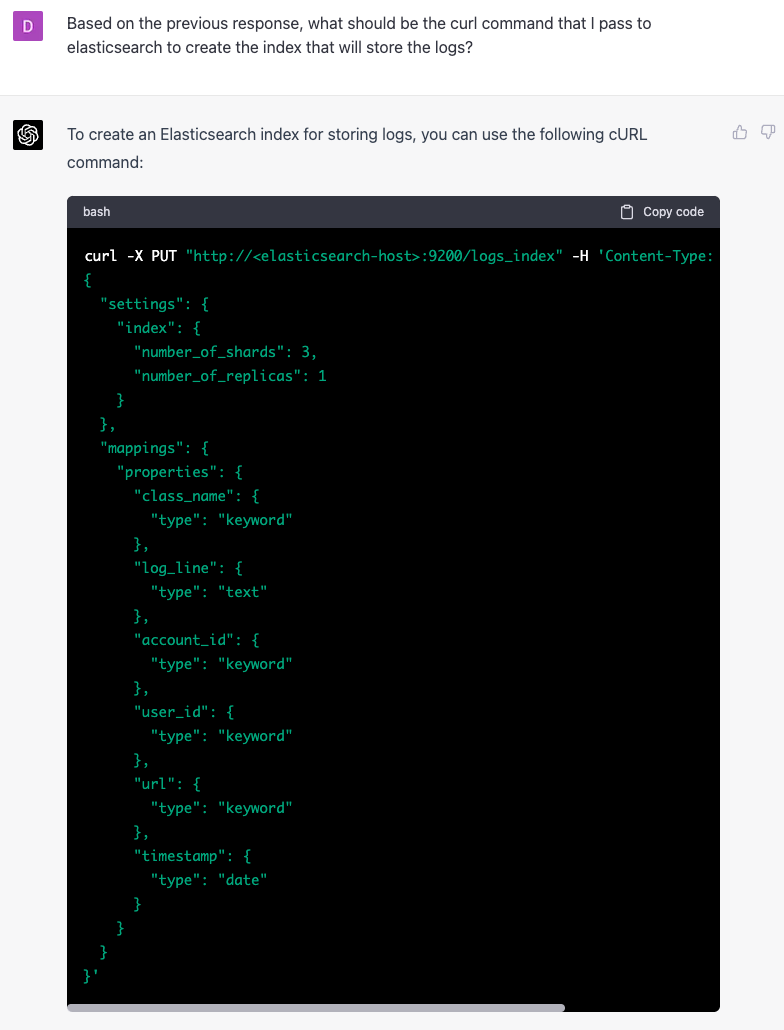

GPT can also help you create parts of this architecture. I asked a follow-up question about the Elasticsearch command for setting up the index.

Again - really neat. This saves me time. I can test this index on my machine before porting the changes to a staging environment.

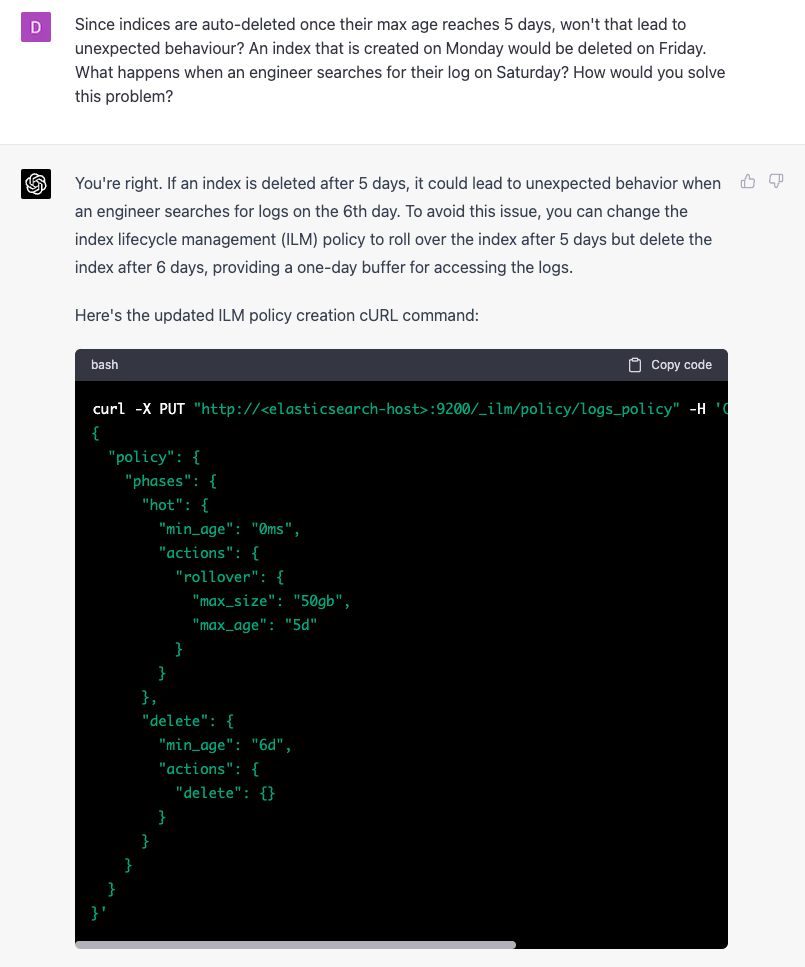

But wait up... GPT says indices will be auto-deleted after 5 days. If a log was generated on day 5, would it not be lost on day 6? From the engineer's perspective, the log should still be indexed since it is not 5 days old. The index is 5 days old, but the log is 1 day old. Let's ask GPT.

GPT suggests that I roll over the index after the 5th day. This would change the write pointer to a new index, but the problem remains. Say a log is written on Friday and its index is deleted on Saturday. On Sunday, the log is only 2 days old, so I would still expect it to be searchable, but it is already gone.
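The mismatch between index age and log age comes down to simple arithmetic. Here's a small sketch of it, assuming both the write window and the deletion age are counted in days from index creation, as in my example. The function names are made up for this post.

```python
def searchable_days_left(log_day: float, delete_age_days: float) -> float:
    """Days a log stays searchable if written when the index is `log_day` days old.

    Assumes the index is deleted once it is `delete_age_days` old,
    counted from index creation.
    """
    return max(0, delete_age_days - log_day)


def min_searchable_days(write_window_days: float, delete_age_days: float) -> float:
    """Worst-case searchable lifetime of any log in the index.

    The unluckiest log is written at the very end of the write window,
    so it only lives for the gap between that moment and deletion.
    """
    if delete_age_days < write_window_days:
        raise ValueError("index would be deleted while still accepting writes")
    return delete_age_days - write_window_days


# A log written on day 4 of an index that is deleted at 5 days.
print(searchable_days_left(log_day=4, delete_age_days=5))

# Worst case when the index takes writes for 5 days and is deleted at 5 days.
print(min_searchable_days(write_window_days=5, delete_age_days=5))
```

With a 5-day write window and deletion at 5 days, the worst case is zero: the newest logs in the index can vanish almost as soon as they arrive. The guarantee only grows when the deletion age is pushed out past the write window.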

Ideally, GPT should recommend that I roll over the index, keep the latest data on a hot node, serve the older data from a warm node, and delete it after 10 days. This would still meet my constraints.
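Here's a rough sketch of what such a policy could look like as an Elasticsearch index lifecycle management (ILM) policy body, built as a Python dict. This is my own guess at it, not GPT's output. The policy name is hypothetical, and the warm-phase actions are just one reasonable choice. One detail matters: once an index has rolled over, ILM counts `min_age` from the rollover time, so a 5-day rollover plus a 5-day delete `min_age` adds up to roughly 10 days from index creation.

```python
import json

# Hypothetical policy name; nothing in the conversation names one.
POLICY_NAME = "short-lived-logs"

ilm_policy = {
    "policy": {
        "phases": {
            "hot": {
                "actions": {
                    # Stop writing to an index once it is 5 days old.
                    "rollover": {"max_age": "5d"}
                }
            },
            "warm": {
                # Enter the warm phase right after rollover.
                "min_age": "0d",
                "actions": {
                    # Old data is still searchable, just no longer written to.
                    "readonly": {},
                    "set_priority": {"priority": 50},
                },
            },
            "delete": {
                # Counted from rollover: about 10 days after index creation.
                "min_age": "5d",
                "actions": {"delete": {}},
            },
        }
    }
}

# Show the request that would register the policy.
print(f"PUT _ilm/policy/{POLICY_NAME}")
print(json.dumps(ilm_policy, indent=2))
```

I left node allocation out of the sketch because how data lands on a warm node depends on the Elasticsearch version and on how the cluster labels its nodes. Like the index command earlier, this is something to try on a local machine before it goes anywhere near staging.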

So what's the verdict? Will I hire GPT?

I don't think this is the right question.

Let's say you have an army of carpenters. They use hacksaws to make 10 tables today. If you could give them an electric saw so they can make 15, would you consider that a valuable investment?

Your engineers look up documentation for Elasticsearch and write curl commands to place into Terraform scripts. If they could use GPT to save time by generating some of these commands, would it be a worthy investment?

GPT does not have all the answers. Sometimes it suggests incorrect answers. But it's a great tool. A tool is a means to an end. An electric saw cannot replace a carpenter. But! You might need fewer carpenters if you have an electric saw :)

A new tool

It's also worth mentioning that GPT opens up a new line of communication that was not possible before: conversational interfaces. Software has always sucked at this. Users want answers to simple questions, such as:

Why is my hard disk full?

Does my computer have a virus?

Where is that file that Chrome just downloaded?

We now have a genuine way to get the user to value faster by cutting the steps a point-and-click interface requires. This opens up possibilities that did not exist before. What problem do we have today that a conversational interface could solve, reducing the time it takes the user to see value?

This new avenue makes GPT exciting.